Today is my last day at Activision.

Not quite the end of an era, but my six-year stint at Activision|Blizzard|King has been by far the longest I’ve worked for any one company so far, and I wanted to write something about it.

I don’t usually do things like this, but long gone are the times when I pretended this blog could stay anonymous. Also, I do think that we should really talk more about our experiences with teams and companies, and be more open. I’ve never done that on this blog (although I’ve always been happy to chat about anything in person), so let me quickly fix that.

This has been my, to be honest quite lucky, video-game career trajectory:

- Milestone (Italy, racing games). The indie company. Here, we were a family. And like most families, often loud and dysfunctional, sometimes fighting, but in the end, for me, it was always fair and always fun. We were pioneers, not because we were necessarily doing state-of-the-art things, but because nobody around us knew better, so we had to figure out everything on our own. Eventually, that became a limiting factor for my own growth, but it was great to start there.

- Electronic Arts (Canada, Fight Night team). My team at EAC was probably the best example of a well-organized game studio. Everything was neat, productions went smoothly, and we created some quite kick-ass graphics as well. EA, and probably even more so EA Sports is truly a game developer. And by that I mean that it takes part in the game development, we had shared guidelines, procedures, technologies, resources. Of course, each team could still have plenty of degrees of freedom to custom-fit the EA way to their specific reality, but you always felt part of a bigger ecosystem and had access to this gigantic wealth of knowledge and people across the globe. Fun times!

- Relic (Space Marines, mostly). Relic had much more of the “indie” feel of my Milestone days. Not quite the same, we were a big team in an even bigger studio, part of a publicly traded company, but we were also exploring uncharted territory (for us), with very smart people and lots of last-minute hacking. I’m proud of what we achieved, it was fun and I loved the spirit we had in our rendering/optimization corner of the office. We did perhaps bite off a bit more than we could chew though, doing something unprecedented for the studio. THQ was also failing fast, which didn’t help.

- Capcom (Vancouver). This studio is now closed. It was an unlucky choice for me, our project was riddled with all kinds of problems, in all possible dimensions. Eventually, I was laid off, then the project was canceled, and a few years later, the studio went down. It was a very stressful time, day after day I was quite unhappy. Still, I have to say I met some excellent people and I’m glad that I now know them!

- And now, Activision.

It’s the people.

One of the company mottos is “it’s the people”. I didn’t think that these “values”, which all companies put forward, were particularly well received in Activision’s case; at least when it came to Central Technology, I always felt we didn’t pay them much attention. But for me, it was the people, first and foremost.

Activision is the place where I found the smartest people around me, by far. And I’ve worked with very smart people, in great companies, but nothing touches this.

Now, I have to say, I also have a unique, very biased vantage point. Being a technical director in central technology means interfacing mostly with the most senior technical people and the studio leadership. I was not in production and not working with a single studio. A different ballgame.

But still, I can’t even make a list here! Ok, to give you a picture. ACME: Michael, Wade, Paul, Aki, I don’t think I can express in a few words the brilliance of these individuals. Paul’s technical abilities are unbeatable. He knows everything and can do anything. Wade is worse, infuriatingly smart, and any time I find the tiniest flaw in Michael’s life I breathe a sigh of relief (my girlfriend says he’s my work husband, even if I do have several man crushes to be honest, yes, there is a list). Aki started as an intern and was recently hired full time. I think he is already a better programmer than I am, and I definitely do not suffer from impostor syndrome...

Peter-Pike Sloan’s research team is the best R&D team I’ve ever seen, with people like Michal Iwanicki, Josiah Manson, Ari... But then again, I literally can’t make a list and I’m talking only about the rendering people, no actually, the rendering people I know best, even! My home team at Radical, which was a great studio in its own right, has been fantastic, Josh, Ryan, Tom, Peter, Andrew. CTN and that shadertoy genius of Paul Malin. And then the game teams. I mean, you can’t beat Drobot, can you? Jorge Jimenez! Dimitar Lazarov, Danny Chan, these are people who every project decide to completely change how Call of Duty renders things. Because why not, right? And of course, the people above me, Christer, whom I admired way before he landed at Activision but also Dave Cowling who hired me, and Andy our CTO who came to speak to us a year before he got hired, and even back then I thought he was an incredibly smart guy. And that's to speak just of the people in the Call of Duty orbit...

Truth is, if you know, you already know. If you don’t, it’s hard for me to tell you, so let’s just cut it short. Amazing people.

Not just smart.

Ok, so we have smart people, blah blah. What gives, are you just showing off or is there a point to this? There is actually.

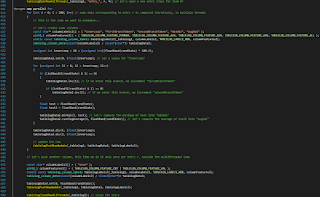

And the lesson is not even “how to hire smart people” or that you should hire smart people. To be honest, I don’t think we have a way, even if Christer (and myself even) did put a lot of time and effort into thinking about the process; you can only affect some multipliers, I think. Mostly, teams seem to me to build up from the gravitational pull around a given company culture. Once you have a given number of people that value given things, they tend to grab other people who also share the same core values, even if you want to have different points of view.

No, what Activision really taught me is that smart people don’t really matter, per se. Brilliance alone doesn’t mean that good things will happen to your products, actually, it can be dangerous because it can correlate with ego, especially if you haven’t reached the highest level of enlightenment yet.

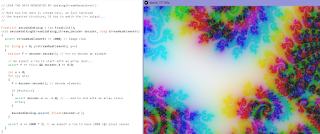

What’s interesting about this particular bunch of smart people is that they are also what actors call “grounded”. There is little bullshit going around. Tech is not made for tech’s sake. We don’t even have an engine, in a time where even if you really just have a game, one game, one codebase, you would give silly codenames to each library and each little bit of tech, and maybe put some big splash screens before your main titles. Made with... xyz.

This is actually a lesson that originates from the early days of Call of Duty at IW. From what I’m told, IW never named their engine, because any time you name something it becomes a bit more of a thing, and you start working for it. And they were a studio making games, the game is the thing. Nothing else.

This meshes just so well with the Activision way. So many people taught me so many lessons here, but the kind of fil rouge I found is this attention to what matters, when, especially for us rendering people, it is so annoyingly easy to get distracted, quite literally, by the cool shiny thing that’s hyped at the moment.

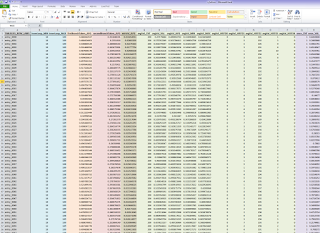

You might even call it ROI, I sometimes do even if perhaps I should not, it sounds “bad”. But you have to be aware of what matters, what the trade-offs are, what you should spend your time on. Which doesn’t mean you are a drone doing complex math in Excel, quite the contrary, there is definitely space for doing things because you want to, and you like to.

Thing is, we cannot really compute the ROI of our tech stuff, especially for a thing that is so far from the product sales as rendering code is. But, we can be aware of these things. Even just thinking about them a bit makes a big difference.

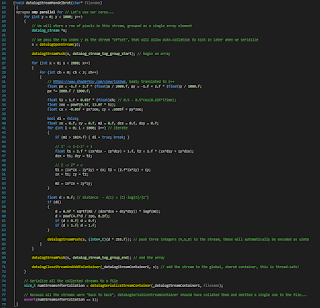

Peter-Pike could be another example. I said I’ve never seen a better R&D team. Does it mean that there aren’t other teams that do research as good, or maybe even “better” sometimes? Of course, there are! The difference though is impact. Not only has almost all our R&D work shipped in the given year’s title, but it’s also focused on what matters. Either helping ship the title, technology that is needed to do what they want to do, or helping the teams work better, technology to help productivity. Often both.

And this, then again, is reflected also in the people. Yes, Peter-Pike is an accomplished academic, and his team does real research, meaning, things we don’t know how or even if we will solve. But he’s also incredibly pragmatic. He is hands-on coding all the time. More than I am. Better than I am!

Activision and Electronic Arts...

...couldn’t be more different, contrary to the popular belief that lumps all the three-four big publishers into the same AAA bucket.

And that is also what was interesting and life-changing. You see, I truly love them both. They are great in their own right and the results speak for themselves. They both have great people, I don’t even need to tell you that.

But they work so differently.

EA as I said, feels like a big game developer, a community. Sharing is one of the key values, finding the best ways of doing things, leveraging their size. It sounds very reasonable, and it is.

But Activision is almost the opposite. It feels like a publisher that owns a number of internal studios. The studios, of course, are accountable to the publisher, but they are independent; that is a key, core value. Central technology is not there to tell people how to do things, but as a publisher-side resource to help if possible. The teams are incredibly strong on their own, even in terms of R&D. And, at least from my vantage point, they get to call all their own shots. Which is again very reasonable, if you tell people they are accountable they should definitely have the freedom of choice too.

And yet again. Valuing independence versus valuing a shared community, the opposite viewpoints end up in practice creating results that are not that dissimilar. How come?

If you’ve been doing this for a while, it won’t even be surprising. The key is that we don’t really know what’s best. Companies and teams, even technologies and products, organize around given values, a cultural environment that was probably created when there were only a handful of employees. And once you have these, the truth is you can most often structure everything else around them in a way that makes sense, that works.

We see this so often in code too. A handful of key decisions are made because of legacy or opinions. We’re going to be a deferred renderer. Well, now certain things are harder, others are easier. Certain stuff needs to happen early in the frame, other stuff late, and you have certain bottlenecks and idle times, and you work from there, find smart ways to put work where the GPU is free, and shift techniques to remove bottlenecks and so on.

Which doesn't mean everything is ever perfect, mind you. We always have pain points and room for improvement, and different strategies yield different issues. To a degree, this is even lucky! I don't make games, I help people and technology. The day Unity will solve all technical and organizational problems for game making is the day I'll be out of a job. At least in this industry...

Ok, then why?