Every now and then people ask me for recommendations on 3d engines for their projects. Honestly, I'm not much of an expert on the topic: I've always written or used in-house solutions, so my knowledge of free middleware is pretty thin.

On top of that, it does not help that most of the engines I've checked out looked terrible to me. You would expect people to try new and cool ideas in the open-source world, where you're not tied to deadlines and products.

You would be wrong, or at least I've never seen anything interesting or new among the thousands of engine sources you can find on the net. Most of the time they follow the same pattern: they are textbook engines.

They are all scenegraph-based. They don't really care about caches or threads. They don't care about iteration time or ergonomics. They support all the wrong formats (e.g. the horrible Collada XML) and they're all busy implementing every "technique" out there. They go against everything I advocate on this blog :D

But enough of this. Here is my list of notable open-source 3d engines out there:

Ogre3D: This is perhaps the most famous one. The good thing is that some commercial products use it; most of them are not graphically intensive, but it's still a good sign.

The community is huge and so is the documentation, so you can expect this engine to be well tested. Also, there are bindings for just about any language out there, and even a native C# port (Axiom).

The architecture does not look very interesting: the engine is big and complicated, and it focuses more on getting a lot of things done than on solving the fundamental problems a 3d engine should address. For example, it has little support for multithreading, just some locks, and even those don't seem to be well tested.

It's packed with ready-made stuff: terrain, shadows, LODs, particles, various culling methods and so on. All I can say is that the quality of those black boxes varies, at best. A few years ago I looked into its LiSPSM shadows only to find them seriously broken.

Nebula (UPDATE: Radon Labs is no more; what may be the last version can be found in the comments. Nebula 2 could be interesting too, while Nebula 3 is a rewrite; there is also a latest version and what seems to be an unofficial community): This is actually the only open-source 3d engine I really like among the ones I've looked at. It's made by the game company Radon Labs, which offers it to the public.

It focuses on a lot of the right things, caring about the general infrastructure more than about specific features. It has also been used in commercial titles, with great results. Radon Labs ships titles on all the consoles, but obviously the open-source engine can't include the parts that depend on the 360 or PS3 devkits. That said, their experience outside the PC realm, where you can push polygons for free without caring much about performance or good coding practices (unless you're shipping an AAA title, that is), is a blessing!

Recommended, even if the community does not seem to be huge or very active; the engine seems to be carried mostly by Radon alone. That means it may be better not to jump on it if you're a total beginner who doesn't want to learn from the source...

OpenSceneGraph: This one is interesting. It focuses on performance and multithreading, even if it's OpenGL-only, and it cares a lot about portability. It's more a visualization engine than a game one, somewhat like NVIDIA SceniX or the old OpenGL Performer.

The project publishes its mission statement, a forum and a development blog. Obviously it's scenegraph-based and, as I said, it looks like an open-source replacement for the dead Performer or the never-born Fahrenheit project. So I wouldn't use it myself, and you need to keep its purpose in mind, but overall it has lots of things going on, a good community and a clear design. There is even a NodeKit for post-processing, and some extensions to use CUDA and OpenCL.

The same really goes for OpenSG: both are worth a look, but I wouldn't recommend either for games or as a guide to designing a 3d engine.

Panda3D: Another engine I don't know much about, but it has shipped titles, and from what I can see I would pick it over OGRE.

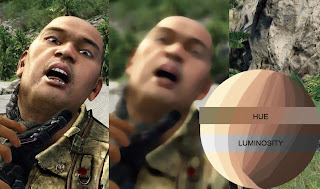

Sauerbraten: The guy behind this engine/game (Wouter van Oortmerssen) is a genius: he has implemented more programming languages than I know, and he worked on Far Cry! Sauerbraten is a very fast 3d engine, to the point that it has even been successfully ported to the iPhone. It's very specialized in what it does, but it's surely interesting and worth a look.

Quake 3: It's old, it's very specific, and it's not actively supported. But it's a serious commercial engine, and it was made by id Software. That's more than enough.

Others worth mentioning:

Horde3D: I don't know much about this one, but some of its features sound right. From what I can tell, no title has shipped with it, and moreover it's OpenGL-only at the moment, which is a bad sign.

Oolong engine: iPhone only.

Quelsolaar stuff: Ideas that are all over the place. Most of them are nice, and some are more toys than productive environments; anyway, worth a look.

After all this, I'd still say that if you are looking for an engine to learn from, take most of these cum grano salis. On the other hand, if you just want a platform to quickly develop a game, maybe you should pay the small price that comes with Unity, or try the UDK. Or simply stick with 2D...