15 November, 2015

Lecture slides for University of Victoria

An introduction to what it is like to be a rendering engineer or researcher in a AAA gamedev production, and why you might like it. Written for a guest lecture to computer graphics undergrads at University of Victoria.

As most people don't love when I put things on scribd, for now this is hosted from my dropbox.

15 August, 2015

Siggraph 2015 course

As some will have noticed, Michal Iwanicki and I were speakers (well, I was much more of a listener, actually) in the physically based shading course this year (thanks again to both Stephens for organizing and inviting us), presenting a talk on approximate models for rendering, while our Activision colleague and rendering lead of Sledgehammer showed some of his studio's work on real world measurements used in Advanced Warfare.

| Before and after :) |

If you weren't at Siggraph this year or you missed the course, fear not, we'll publish the course notes soon (I need to do some proof reading and adding bibliography) and the course notes are "the real deal", as in twenty minutes we couldn't do much more than a teaser trailer on stage.

I wanted to write, though, about the reasons that motivated me to present that material in the course, and to give some background. Creating approximations might be time consuming sometimes, but it's often not that tricky. Per se, I don't think it's the most exciting topic to talk about.

But it is important, and it is important because we are still too often too wrong. Too many times we use models that we don't completely understand, that are exact under assumptions we didn't investigate and for which we don't know what perceptual error they cause.

You can nowadays point your finger at any random real time rendering technique, really look at it closely, compare with ground truth, and you're more likely than not to find fundamental flaws and simple improvements through approximation.

This is a very painful process, but necessary. PBR is like VR, it's an all or nothing technique. You can't just use GGX and call it a day. Your art has to be (perceptually) precise, your shadows, your GI, your post effect, there is a point where everything "snaps" and things just look real, but it's very easy to be just a bit off and ruin the illusion.

Worse, errors propagate non-locally as artists try to compensate for our mistakes in the rendering pipeline by skewing the assets to try to reach as best as they can a local minimum.

Moreover, we are also... not helped, I fear, by the fact that some of these errors are only ours: we commit them in application, while the theory in many cases is clear. We often have research from the seventies and the eighties that we should just read more carefully.

For decades in computer graphics we rendered images in gamma space, but there isn't anything for a researcher to publish about linear spaces, and even today we largely ignore what colorspaces really are and what we should use, for example.

We don't challenge the assumptions we work with.

A second issue, I think, is that sometimes it's just neater to work with assumptions than it is to work on approximations. And it is preferable to derive our math exactly via algebraic simplifications; the problem is that when we simplify by imposing an assumption, its effects should be measured precisely.

If we consider constant illumination, and no bounces, we can define ambient occlusion, and it might be an interesting tool. But in reality it doesn't exist, so when is it a reasonable approximation? Then things don't exactly work great, so we tweak the concept and create ambient obscurance, which is better, but to a degree even more arbitrary. Of course this is just an example, but note: we always knew that AO is something odd and arbitrary, it's not a secret, but even in this simple case we don't really know how wrong it is, when it's more wrong, and what could be done to make it measurably better.

You might say that even just finding the errors we are making today and what is needed to bridge the gap, make that final step that separates nice images from actual photorealism, is a non-trivial open problem (*).

It's actually much easier to implement many exciting new rendering features in an engine than to make sure that even a very simple and basic renderer is (again perceptually) right. And on the other hand, if your goal is photorealism, it's surely better to have a very constrained renderer in very constrained environments that is more accurate than a much fancier one used with less care.

I was particularly happy at this Siggraph to see that more and more we are aware of the importance of acquired data and ground truth simulations, the importance of being "correct", and there are many researchers working to tackle these problems that might seem even to a degree less sexy than others, but are really important.

In particular, right after our presentations Brent Burley showed, yet again, a perfect mix of empirical observations, data modelling and analytic approximations in his new version of Disney's BRDF, and Luca Fascione did a better job than I could ever do of explaining the importance of knowing your domain, knowing your errors, and the continuum of PBR evolution in the industry.

P.S. If you want to start your dive into Siggraph content right, start with Alex "Statix" Evans' amazing presentation in the Advances course: cutting edge technology presented through a journey of different prototypes and ideas.

Incredibly inspiring, I think also because the technical details were sometimes blurred just enough to let your own imagination run wild (read: I'm not smart enough to understand all the stuff... -sadface-).

Really happy also to see many teams share insights "early" this year, before their games ship, we really are a great community.

P.S. after the course notes you might get a better sense of why I wrote some of my past posts like:

- Area lights. No bare bulb point lights.

- Scientific Python 101 and Mathematica 101.

- Cube map errors, and part two.

- Visualization.

(*) I loved the open problems course, I think we need it each year and we need to think more about what we really need to solve. This can be a great communication channel between the industry, the hardware manufacturers and academia. Choose wisely...

04 July, 2015

The following provides no answers, just doubts.

Technical debt, software rot, programming practices, sharing and reuse etcetera. Many words have been written on software engineering, just today I was reading a blog post which triggered this one.

Software does tend to become impervious to change and harder to understand as it ages and increases in complexity, that much is universally agreed on, and in general it's understood that malleability is one key measure of code and practices that improve or retain it are often sensible.

But when does code stop being an asset and start being a liability? For example, when should we invest in a rewrite?

Most people seem to be divided in camps on these topics, at least in my experience I’ve often seen arguments and entire teams even run on one conviction or another, either aggressively throwing away code to maintain quality or never allowing rewrites to capitalize on investments made in debugging, optimization and so on.

Smarter people might tell you that different decisions are adequate for different situations. Not all code needs to be malleable: as we stratify, certain layers become more complex but also require less change, while new layers are the ones that we actively iterate upon and that need more speed.

Certainly this position has lots of merit, and it can be extended to the full production stack I’d say, including the tools we use, the operating systems we use and such things.

Such a position just makes our evaluation more nuanced and reasonable, but it doesn’t really answer many questions. What is the acceptable level of stiffness in a given codebase? Is there a measure? Who do we ask? It might be tempting to just look at the rate of change, at where we usually put more effort, but most of these things are exposed to a number of biases.

For example I usually tend to use certain systems and avoid others based on what makes my life easier when solving a problem. That doesn’t mean that I use the best systems for a given problem, that I wouldn’t like to try different solutions and that these wouldn’t be better for the end product.

Simply though, as I know they would take more effort I might think they are not worth pursuing. An observer, looking at this workflow would infer that the systems I don’t use don’t need much flexibility, but on the contrary I might not be using them exactly because they are too inflexible.

In time, with experience, I’ve started to believe that all these questions are hard for a reason, they fundamentally involve people.

As an engineer, or rather a scientist, one grows up with the ideal of simple formulas that explain complex phenomena, but people's behaviour still seems to elude such simplifications.

Like in cheap management books (are there any others?), you might get a simple list of rules that do make a lot of sense, but are really just arbitrary rules that happened to work for someone (in the best case very specific tools, in the worst just crap that seems reasonable enough but has no basis). They gain momentum until people realize they don’t really work that well, and someone else comes up with a different but equally arbitrary set of new rules and best practices.

They are never backed by real, scientific data.

In reality your people matter more than any rule, and the practices of a given successful team don’t transfer to other teams. Often I’ve seen different teams making even similar products successfully using radically different methodologies, and vice versa, teams using the same methodologies in the same company managing to achieve radically different results.

Catering to a given team culture is fundamental, what works for a relatively small team of seniors won’t apply to a team for example with much higher turnover of junior engineers.

Failure often comes from people who grew up in given environments, with given methodologies adapted to the culture of a certain team, and who, as that was successful once, try to apply the same to other contexts where it is not appropriate.

In many ways it’s interesting, working with people encourages real immersion into an environment and reasoning, observing and experimenting what specific problems and specific solutions one can find, rather than trying to apply a rulebook.

In others, I still believe it’s impossible to silence that nagging feeling that we should be more scientific, that if medicine manages to work with best practices based on statistics, so can any other field. So far I've never seen big attempts at making software development a science deployed in a production environment.

Maybe I'm wrong and there is a universal best way of working, for everyone. Maybe certain things that are considered universal today really aren't. It wouldn't be surprising, as these kinds of paradigm shifts seem to happen in the history of other scientific fields.

Interestingly, we often fill in questionnaires to gather subjective opinions about many things, from meetings to overall job satisfaction, but never (in my experience) on the code we write or the way we make it, where time is spent, where bugs are found and so on...

I find it amusing to observe how code and computer science are used to create marvels of technological progress, incredible products and tools that improve people’s lives, and that are scientifically designed to do so, yet often the way these are made is quite arbitrary, messy and unproductive.

And that also means that more often than not we use and appreciate certain tools we use to make our products but we can’t dare to think how they really work internally, or how they were made, because if we knew or focused on that, we would be quite horrified.

P.S.

Software science does exist, in many forms, and is almost as old as software development itself, we do have publications, studies, metrics and even certain tools. But still, in production, software development seems more art than science.

26 April, 2015

Sharing is caring

Knowledge > Code.

Code is cheap, code needs to be simple. Knowledge is expensive, so it makes lots of sense to share it. But, how do we share knowledge in our industry?

Nearly all you really see today is the following: a product ships, people wrap it up, and some good souls start writing presentations and notes which are then shared either internally or externally in conferences, papers and blogs.

This is convenient both because at the end of production people have more time on their hands for such activities, and because it's easier to get company approval for sharing externally after the product shipped.

What I want to show here are some alternative modalities and what they can be used for.

Showing without telling.

Nowadays an increasingly lost art, bragging has been the foundation of the demo-scene. Showing that something is possible, teasing others into a competition can be quite powerful.

| The infamous fountain part from Stash/TBL |

One of the worst conditions we sometimes put ourselves into is to stop imagining that things are possible. It's a curse that comes especially as a side-effect of experience: we become better at doing a certain thing consistently and predictably, but it can come at the cost of not daring to try crazy ideas.

Just knowing that someone did somehow achieve a given effect can be very powerful, it unlocks our minds from being stuck in negative thinking.

I always use as an example Crytek's SSAO in the first Crysis title, which was brought to my attention by an artist with a great "eye" while playing the game at the company I was working for at the time. I immediately started thinking about how realtime AO was possible, and the same day I quickly created a shader by modifying code from relief mapping which came close to what the actual technique was (albeit, as you can imagine, much slower, as it was actually ray marching the z-Buffer).

This is particularly useful if we want to incentivize others to come up with different solutions, to engage their minds. It's also easy: it can be done early, it doesn't take much work and it doesn't come with the same IP issues as sharing your solution.

Open problems.

If you have some experience in this field, you have to assume we are still making lots of large mistakes. Year after year we learned that our colors were all wrong (2007: the importance of being linear), that our normals didn't mean what we thought (2008: care and feeding of normal vectors) and that they didn't mipmap the way we did (2010: lean mapping), that area lights are fundamental, that specular highlights have a tail and so on...
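As a small illustration of the first of these mistakes, here is a minimal Python sketch of what "being linear" means in practice. It assumes the standard sRGB transfer function; the function names are made up for this example.

```python
def srgb_to_linear(c):
    """Decode one sRGB-encoded channel value in [0, 1] to linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c):
    """Encode one linear-light channel value in [0, 1] to sRGB."""
    if c <= 0.0031308:
        return c * 12.92
    return 1.055 * c ** (1.0 / 2.4) - 0.055


def average_gamma_space(a, b):
    """The historical mistake: average the encoded values directly."""
    return 0.5 * (a + b)


def average_linear_space(a, b):
    """Decode, average the actual light, then encode again."""
    return linear_to_srgb(0.5 * (srgb_to_linear(a) + srgb_to_linear(b)))


black, white = 0.0, 1.0
naive = average_gamma_space(black, white)     # 0.5 in encoded units
correct = average_linear_space(black, white)  # roughly 0.735 in encoded units
print(naive, correct)
```

A 50/50 mix of black and white should emit half the light of white, which encodes to a noticeably brighter value than the 0.5 obtained by averaging the encoded numbers. Any filtering, blending or lighting done on encoded values carries the same kind of bias.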

Actually you should know of many errors you are making even right now: probably some that are known but you were too lazy to fix, some you know you are handwaving away without strong error bounds, and many more you don't suspect yet. The rendering equation is beautifully hard to solve.

| The rendering equation |

We can't fix a problem we don't know is there, and I'm sure a lot of people have found valuable problems in their work that the rest of our community have overlooked. Yet it's lucky if we find an honest account of open problems as further research suggestions at the end of a publication.

Sharing problems is again a great way of creating discussion and engaging minds, and again it is easier to do than sharing full solutions. But even internally in a company it's hard to find such examples: people underestimate the importance of this information, and sometimes our egos come into play, as even subconsciously we think we have to find a solution ourselves before we can present.

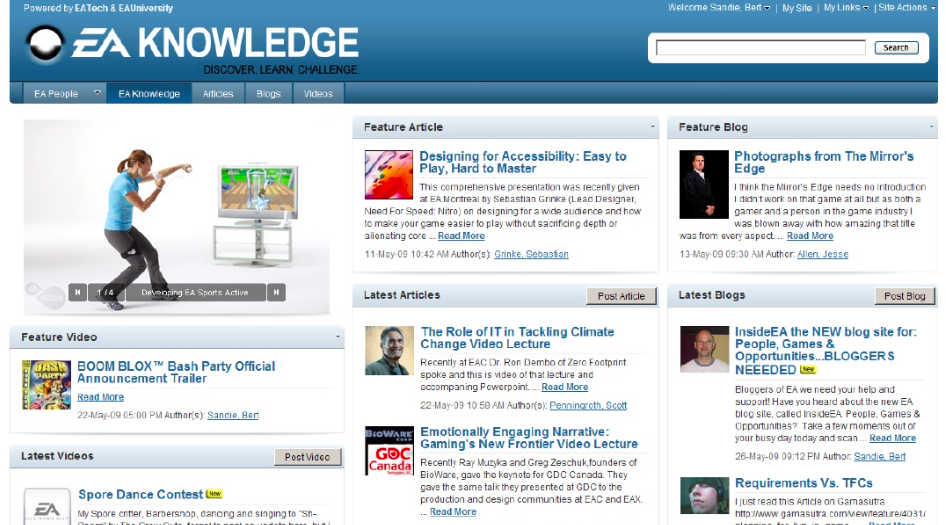

Hilbert knew better. Johan Andersson did something along these lines for the realtime rendering community, but even if EA has easily the best infrastructure and dedication to knowledge sharing I've ever seen, discussion about open problems was uncommon there (at least in my experience).

Establishing a new sharing pattern is hard, requires active dedication before it becomes part of a culture, and has to be rewarded.

The journey is the destination.

It's truly silly to explore an unknown landscape and mark only a single point of interest. We would map the entire journey, noting intersections, roads we didn't take, and ones we took and had to backtrack from. Even algorithms know better.

Hoarding information is cheap and useful, and keeping notes as one works is something that everybody does in various forms. The only thing needed is to be mindful about saving progress over time, as snapshots.

The main hurdle we face is really ego and expectations, I think. I've seen many people having problems sharing, even internally, work that is not yet "perfect" or thinking that certain information is not "worth" presenting.

| Artists commonly share WIP. Michelangelo's unfinished sculptures are fascinating. |

Few people share work-in-progress of technical ideas in scientific fields, even when we do share information on the finished product. It's just not something we are used to seeing.

Internally it's easy and wise to share work-in-progress, and you really want people's ideas to come to you early in your work, not after you already wrote thousands of lines of code, just to find someone had a smarter solution or, worse, already had code for the same thing, or was working on it at the same time.

Externally it's still great to tell about the project's history, what hurdles were found, what things were left unexplored. Often reading papers, with some experience, one can get the impression that certain things were needed to circumvent untold issues of what would otherwise seem to be more straightforward solutions.

Is it wise to let people wonder about these things, potentially exploring avenues that were already found to be unproductive? On the other hand, documenting these avenues explicitly might sometimes give others ideas on how to overcome a given hurdle in a different way. Also consider that different people have different objectives and tradeoffs...

The value of failure.

If you do research, you fail. That is almost the definition of research work (and the reason for fast iteration), if you're not allowed to fail you're in production, not inventing something new. The important thing is to learn, and thus as we are learning, we can share.

Vice-versa, if your ideas are always or often great and useful, then probably you're not pushing yourself hard enough (not that it is necessarily a bad thing - often we have to do the things that we exactly know how to do, but that's not research).

When doing research do we spend most time implementing good solutions or dealing with mistakes? Failing is important, it means we are taking risks, exploring off the beaten path, it should be rewarded, but that doesn't mean there is an incredible value for people to encounter the same pitfalls over and over again.

Yet, failures don't have any space in our discussions. We hide them, as if having found that a path is not viable were not great information to share.

Even worse really, most ideas are not really entirely "bad". They might not work right now, or in the precise context they were formulated, but often we have failures on ideas that were truly worth exploring, and didn't pan out just because of some ephemeral contingencies.

Moreover this is again a kind of sharing that can be "easier", usually a company legal department has to be involved when we share our findings from shipped products, but fewer people would object if we talk about things that never shipped and never even -worked-.

Lastly, even when we communicate about things that actually do work, we should always document failure cases and downsides. This is really a no-brainer, it should be a requirement in any serious publication, it's just dishonest not to do so, and nothing is worse than having to implement a technique just to find all the issues that the author did not care to document.

P.S. Eric Haines a few days ago shared his view on sharing code as part of research projects. I couldn't agree more, so I'll link it here.

The only remark I'd like to add is that while I agree that code doesn't even need to be easy to build to be useful, ease of building is something that should be given priority if possible.

Having code that is easy to build is better than having pretty code, or even code that builds "prettily". Be extremely pragmatic.

I don't care if I have to manually edit some directories or download specific versions of libraries in specific directories, but I do hate it if your "clean" build system wants me to install N binary dependencies just to spit out a simple Visual Studio .sln you could have provided to begin with, because it means I probably won't have the patience to look at it...

28 March, 2015

Being more wrong: Parallax corrected environment maps

Introduction

A follow-up to my article on how wrong we do environment map lighting, or how to get researchers excited and engineers depressed.

Here I'll have a look at the errors we incur when we want to adopt "parallax corrected" (a.k.a. "localized" or "proxy geometry") pre-filtered cube-map probes, a technique so very popular nowadays.

I won't explain the base technique here, for that please refer to the following articles:

- Sebastien Lagarde in GPU Pro 4 and his presentation at Siggraph 2012 were very influential

- Approximating ray-tracing on the GPU with distance impostors is an earlier, closely related technique.

- Going even further back in time, Brennan from AMD, following ideas from Apodaca, suggested intersecting the reflection with a bounding sphere, but in a fast, approximate way. For par condicio, here is a similar article by NVidia; surely the same ideas have been "rediscovered" many times by different people.

- See the STAR on Specular Effects on the GPU for a wider overview.
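For readers who want the gist without following the links, the core of the box-proxy variant can be sketched in a few lines of Python. This is only an illustration under simple assumptions (an axis-aligned box, a shaded point strictly inside it, hypothetical function and parameter names), not the code from any of the articles above.

```python
import numpy as np


def parallax_corrected_lookup(pos, refl_dir, box_min, box_max, probe_pos):
    """Cubemap lookup direction for a reflection ray traced against a box proxy.

    Assumes `pos` lies strictly inside the axis-aligned box [box_min, box_max]
    and `refl_dir` is non-zero. All names here are hypothetical.
    """
    pos = np.asarray(pos, dtype=float)
    refl_dir = np.asarray(refl_dir, dtype=float)
    box_min = np.asarray(box_min, dtype=float)
    box_max = np.asarray(box_max, dtype=float)
    probe_pos = np.asarray(probe_pos, dtype=float)

    # Starting inside the box, the ray leaves through the nearest of the
    # (up to three) planes it is travelling towards.
    t_exit = np.inf
    for axis in range(3):
        d = refl_dir[axis]
        if d > 0.0:
            t_exit = min(t_exit, (box_max[axis] - pos[axis]) / d)
        elif d < 0.0:
            t_exit = min(t_exit, (box_min[axis] - pos[axis]) / d)

    hit = pos + t_exit * refl_dir
    # Fetch the cubemap along the direction from the probe's capture point
    # to the hit point, instead of along the raw reflection vector.
    lookup = hit - probe_pos
    return lookup / np.linalg.norm(lookup), hit


direction, hit = parallax_corrected_lookup(
    pos=[0.5, 0.0, 0.0], refl_dir=[0.0, 1.0, 0.0],
    box_min=[-1.0, -1.0, -1.0], box_max=[1.0, 1.0, 1.0],
    probe_pos=[0.0, 0.0, 0.0])
print(direction, hit)
```

In the example the shaded point sits half a unit to the side of the probe, so the corrected fetch direction tilts away from the raw reflection vector. When the shaded point coincides with the probe position the two directions are the same.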

Errors, errors everywhere...

All these are in -addition- to the errors we commit when using the standard cubemap-based specular lighting.

1) Pre-filter shape

Let's imagine we're in an empty rectangular room, with diffuse walls. In this case the cubemap can be made to accurately represent radiance from the room.

We want to prefilter the cubemap to be able to query irradiance in a fast way. What shape does the filter kernel have?

- The cubemap is not at infinite distance anymore -> the filter doesn't depend only on angles!

- We have to look at how the BRDF lobe "hits" the walls, and that depends on many dimensions (view vector, normal, surface position, surface parameters)

- Even in the easy case where we assume the BRDF lobe to be circularly symmetric around the reflection, and we consider the reflection to hit a wall perpendicularly, the footprint won't be exactly identical to one computed only on angles.

- More worryingly, that case won't actually happen often, the BRDF will often hit a wall, or many walls, at an angle, creating an anisotropic footprint!

- Pre-filtering "from the center", using angles, will skew the filter size near the cube vertices, but unlike infinite cubemaps, this is not exactly justified in this case, it optimizes for a single given point of view (query position)

- Moreover! This is not radiance emitted from some magic infinitely distant environment. If we consider geometry, even through a proxy, we should then consider how that geometry emits radiance, which at each surface point is a 2D (spherical) function of direction. So we should bake a 4D representation, e.g. a cube map of spherical harmonic coefficients...

The filter doesn't have a direct, one-to-one relationship with the material roughness... Knowing we have a prefiltered cube, we can try to work out which fetch or fetches best approximate the actual BRDF footprint on the proxy geometry.
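The dependence on distance is easy to see even in the friendliest case from the list above. The following Python sketch is a deliberately crude model: the lobe is replaced by a cone of a given half-angle, the reflection hits the wall perpendicularly, and the probe center is assumed to sit on the same perpendicular line. The function name and the numbers are illustrative only.

```python
import math


def footprint_half_angle_from_probe(lobe_half_angle, surface_to_wall, probe_to_wall):
    """Angular half-size, as seen from the probe center, of the disc that a
    cone-shaped lobe leaving the surface point paints on the wall."""
    radius = surface_to_wall * math.tan(lobe_half_angle)
    return math.atan(radius / probe_to_wall)


lobe = math.radians(20.0)
probe_to_wall = 2.0
for surface_to_wall in (0.5, 2.0, 3.5):
    seen = footprint_half_angle_from_probe(lobe, surface_to_wall, probe_to_wall)
    print(surface_to_wall, round(math.degrees(seen), 1))
```

The angular filter baked from the probe center matches the lobe only when the surface is as far from the wall as the probe is. Move the surface closer or further away and the same roughness needs a narrower or a wider fetch, before even considering oblique hits.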

This problem can be seen also from a different point of view:

- Let's assume we have a perfectly prefiltered cube for a given surface location in space (query point or "point of view").

- Let's compute a new cubemap for a different point in space, by re-projecting the information in the first cubemap to the new point of view via the proxy geometry (or even the actual geometry, for that matter...).

- Let's imagine the filter kernel we applied at a given cubemap location in the original pre-filter.

How will it become distorted after the projection we do to obtain the new cubemap? This is the distortion that we need to compensate somehow...

This issue is quite apparent with rougher objects near the proxy geometry, it results in a reflection that looks sharper, less rough than it should be, usually as we underfilter compared to the actual footprint.

A common "solution" is to not use parallax projection as the surfaces get rougher, which creates lighting errors.

| I made this BRDF/plane intersection visualization while working on area lights, the problem with cubemaps is identical |

2) Visibility

In most real-world applications, the geometry we use for the parallax correction (commonly a box) doesn't exactly match the real world geometry. Environments made only of perfectly rectangular, perfectly empty rooms might be a bit boring.

As soon as we place an object on the ground, its geometry won't be captured by the reflection proxy, and we will be effectively raytracing the reflection past it, thus creating a light leak.

This is really quite a hard problem, light leaks are one of the big issues in rendering, they are immediately noticeable and they "disconnect" objects. Specular reflections in PBR tend to be quite intense, and so it's not easy even to just occlude them away with standard methods like SSAO (and of course considering only occlusion would be per se an error, we are just subtracting light).

An obvious solution to this issue is to just enrich somehow the geometrical representation we have for parallax correction, and this could be done in quite a lot of ways, from having richer analytic geometry to trace against, to using signed distance fields and so on.

All these ideas are neat, and will produce absolutely horrible results. Why? Because of the first problem we analyzed!

The more complex and non-smooth your proxy geometry is, the more problems you'll have pre-filtering it. In general if your proxy is non-convex your BRDF can splat across different surfaces at different distances and will horribly break pre-filtering, resulting in sharp discontinuities on rough materials.

Any solution to this that wants to use non-convex proxies needs to have a notion of prefiltered visibility, not just irradiance, and the ability to do multiple fetches (blending them based on the prefiltered visibility).

A common trick to partially solve this issue is to "renormalize" the cube irradiance based on the ratio between the diffuse irradiance at the cube center and the diffuse irradiance at the surface (commonly known via lightmaps).

The idea is that this ratio expresses reasonably well how different in intensity (due to occlusions and other reflections) the cubemap would be if it were baked from the surface point.

This trick works for rough materials, as the cubemap irradiance gets more "similar" to diffuse irradiance, but it breaks for sharp reflections... Somewhat ironically here the parallax cubemap is "best" with rough reflections, but we saw the opposite is true when it comes to filter footprint...
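In code, the renormalization trick amounts to a single multiply. The sketch below uses scalar intensities and hypothetical names. In a real renderer the two diffuse terms would come from whatever baked data is available (lightmaps, probes) and the scaling would typically be applied per color channel.

```python
def renormalized_specular(cube_radiance, diffuse_at_surface, diffuse_at_probe, eps=1e-6):
    """Scale a cubemap fetch by the ratio of diffuse irradiance at the shaded
    surface to diffuse irradiance at the point the cubemap was baked from."""
    ratio = diffuse_at_surface / max(diffuse_at_probe, eps)
    return cube_radiance * ratio


# A surface receiving half the diffuse light of the probe center gets its
# reflection dimmed by the same factor.
print(renormalized_specular(2.0, 0.25, 0.5))
```

The small `eps` is only there to avoid dividing by zero when the probe was baked in darkness. It is an arbitrary choice for this example.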

| McGuire's Screen Space Raytracing |

3) Other errors

For completeness, I'll mention here some other relatively "minor" errors:

- Interpolation between reflection probes. We can't have a single probe for the entire environment, likely we'll have many that cover everything. Commonly these are made to overlap a bit and we interpolate while transitioning from one to another. This interpolation is wrong: note that if the two probes reprojected identically at a border between them, we wouldn't need to interpolate to begin with...

- These reflection proxies capture, for each texel, only the radiance scattered in one specific direction. If the scattering is not purely diffuse, you'll have another source of error.

- Baking the scattering itself can be complicated: without a path tracer you risk "missing" some light due to multiple scattering.

- If you have fog (atmospheric scattering), its influence has to be considered, and it can't really be just pre-baked in the probes correctly (it depends on how much fog the reflection rays traverse, and it's not just attenuation, it will scatter the reflection rays, altering the way they hit the proxy).

- Question: what is the best point inside the proxy geometry volume from which to bake the cubemap probe? This is usually hand authored and artists tend to place it as far away as possible from any object (this could indeed be a heuristic, easy to implement).

- Another way of seeing parallax-corrected probes is to think of them as textured area lights.

A common solution to mitigate many issues is to use screen space reflections (especially if you have the performance to do so), fading to baked cubemap proxies only where the SSR doesn't have data to work with.

I won't delve into the errors and issues of SSR here, it would be off-topic, but take care that the two methods represent the same radiance. Even when that's done correctly, the transition between the two techniques can be very noticeable and distracting, it might be better to use one or the other based on location.

| From GPU-Based Importance Sampling. |

Conclusions

If you think you are not committing large errors in your PBR pipeline, you didn't look hard enough. You should be aware of many issues, most of them having a real, practical impact and you should assume many more errors exist that you haven't discovered yet.

Do your own tests, compare with real-world, be aware, critical, use "ground truth" simulations.

Remember that in practice artists are good at hiding problems and working around them, often asking for non-physical adjustment knobs that they will use to tune down or skew certain effects.

Listen to these requests as they probably "hide" a deep problem with your math and assumptions.

Finally, some tips on how to try to solve these issues:

- PBR is not free from hacks (not even offline...), there are many things we can't derive analytically.

- The main point of PBR is that now we can reason about physics to do "well motivated" hacks.

- That requires having references and ground truth to compare and tune.

- A good idea for this problem is to write an importance sampled shader that does glossy reflections via many taps (doing the filtering part in realtime, per shaded point, instead of pre-filtering).

- A full raytraced ground truth is also handy, and you don't need to recreate all the features of your runtime engine...

- Experimentation requires fast iteration and a fast and accurate way to evaluate the error against ground truth.

- If you have a way of programmatically computing the error from the realtime solution to the ground truth, you can figure out models with free parameters that can be then numerically optimized (fit) to minimize the error...
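As a rough sketch of the last few points, the Python below brute-forces a many-tap, GGX importance-sampled specular integral and then fits a one-parameter model to it. Everything specific here is an assumption made for illustration, not something taken from a particular engine: the normal is fixed to +Z, Fresnel is set to 1, the visibility term is a Schlick-Smith approximation with k = alpha / 2, the environment is a hypothetical `white_env` callable, and the model `1 - c * roughness^2` is an arbitrary stand-in for whatever realtime approximation you want to tune.

```python
import math

def radical_inverse_base2(i):
    """Van der Corput sequence: mirrors the bits of i around the binary point."""
    result, f = 0.0, 0.5
    while i:
        if i & 1:
            result += f
        i >>= 1
        f *= 0.5
    return result

def g1_schlick(n_dot_x, k):
    """One-direction Schlick-Smith visibility term (an assumed approximation)."""
    return n_dot_x / (n_dot_x * (1.0 - k) + k)

def reference_specular(env, roughness, n_dot_v, num_samples=1024):
    """Many-tap GGX importance-sampled specular, Fresnel = 1, normal = +Z."""
    a = roughness * roughness
    k = a / 2.0
    v = (math.sqrt(max(0.0, 1.0 - n_dot_v * n_dot_v)), 0.0, n_dot_v)
    total = 0.0
    for i in range(num_samples):
        u1 = (i + 0.5) / num_samples          # stratified in the polar angle
        u2 = radical_inverse_base2(i)         # low-discrepancy azimuth
        cos_t = math.sqrt((1.0 - u1) / (1.0 + (a * a - 1.0) * u1))
        sin_t = math.sqrt(max(0.0, 1.0 - cos_t * cos_t))
        phi = 2.0 * math.pi * u2
        h = (sin_t * math.cos(phi), sin_t * math.sin(phi), cos_t)
        v_dot_h = v[0] * h[0] + v[1] * h[1] + v[2] * h[2]
        l = tuple(2.0 * v_dot_h * hc - vc for hc, vc in zip(h, v))
        n_dot_l = l[2]
        if n_dot_l <= 0.0 or v_dot_h <= 0.0:
            continue                          # light direction below the surface
        g = g1_schlick(n_dot_v, k) * g1_schlick(n_dot_l, k)
        # BRDF * cosine / pdf for half vectors sampled proportionally to D * (n.h)
        total += env(l) * g * v_dot_h / (cos_t * n_dot_v)
    return total / num_samples

def fit_error(c, samples):
    """Sum of squared differences between the toy model and the reference."""
    return sum((1.0 - c * r * r - truth) ** 2 for r, truth in samples)

def golden_section_minimize(f, lo, hi, iterations=60):
    """Minimizes a unimodal 1D function on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    for _ in range(iterations):
        x1 = hi - inv_phi * (hi - lo)
        x2 = lo + inv_phi * (hi - lo)
        if f(x1) < f(x2):
            hi = x2
        else:
            lo = x1
    return 0.5 * (lo + hi)

white_env = lambda direction: 1.0             # hypothetical constant environment
samples = [(r / 10.0, reference_specular(white_env, r / 10.0, 1.0))
           for r in range(1, 11)]
best_c = golden_section_minimize(lambda c: fit_error(c, samples), 0.0, 2.0)
```

With a constant white environment the reference is just the directional albedo of the lobe. In a real test `env` would sample your cubemap, and the error would be measured between images from the runtime and from the reference.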

21 March, 2015

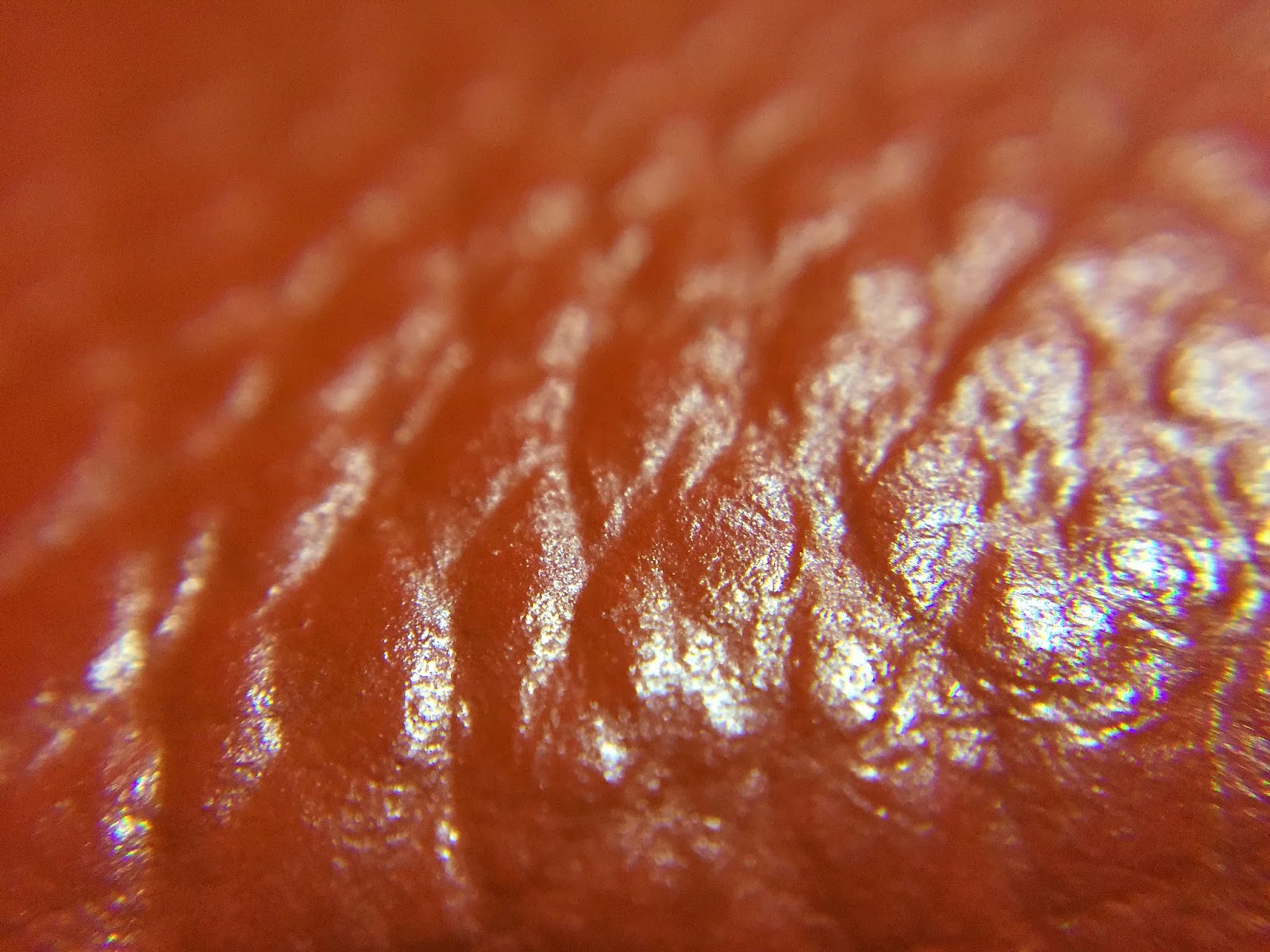

Look closely

The BRDF models surface roughness at scales bigger than the wavelength of light, but smaller than the observation scale.

In practice surfaces have details at many different scales, and we know that the BRDF has to change depending on the observation scale, which for us is the pixel's projected footprint on the surface.

Specular antialiasing of normal maps (from Toksvig onwards), which is very popular nowadays, models exactly the fact that at a given scale the surface roughness, in this case represented by normal maps, has to be incorporated into the BRDF. The pixel footprint is automatically considered by mipmapping.
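To make the idea concrete, here is a minimal sketch of Toksvig's factor in Python. It assumes a Blinn-Phong style specular exponent (`spec_power`, a name picked for this example) and takes the averaged, unnormalized normal that mipmapping a normal map would produce; the shorter that average, the more the normals inside the footprint disagree, and the wider the lobe becomes.

```python
import math

def average_normal(normals):
    """Box-filters unit normals the way a mip level would, without renormalizing."""
    n = len(normals)
    return tuple(sum(v[i] for v in normals) / n for i in range(3))

def toksvig_exponent(avg_normal, spec_power):
    """Effective specular exponent given the length of the averaged normal."""
    length = min(math.sqrt(sum(c * c for c in avg_normal)), 1.0)
    ft = length / (length + spec_power * (1.0 - length))  # Toksvig factor
    return ft * spec_power

# Four identical normals: the footprint is flat, the exponent is unchanged.
flat = [(0.0, 0.0, 1.0)] * 4
# Normals tilted 0.2 radians either way: the footprint is bumpy.
tilt = 0.2
bumpy = [(math.sin(tilt), 0.0, math.cos(tilt)),
         (-math.sin(tilt), 0.0, math.cos(tilt))]

flat_power = toksvig_exponent(average_normal(flat), 100.0)
bumpy_power = toksvig_exponent(average_normal(bumpy), 100.0)
```

Here `bumpy_power` comes out well below `flat_power`: the detail that the mip level can no longer resolve as normals has been moved into the BRDF as extra roughness.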

Consider now what happens if we look at a specific frequency of surface detail. If we look at it closely enough, over an interval that is a fraction of the detail's wavelength, the detail will “disappear” and the surface will look smooth, determined only by the underlying material BRDF and an average normal over such interval.

If we “zoom out” a bit though, at scales roughly proportional to the detail wavelength, the surface starts to get complicated. We can no longer represent it over such intervals as a single normal, nor is it easy to capture such detail in a simple BRDF; we’ll need multiple lobes or more complicated expressions.

Zoom out even more and now your observation interval covers many wavelengths, and the surface properties look like they are representable again in a statistical fashion. If you’re lucky it might be in a way that is possible to capture by the same analytic BRDF of the underlying material, with a new choice of parameters. If you are less lucky, it might require a more powerful BRDF model, but still we can reasonably expect to be able to represent the surface in a simple, analytic, statistical fashion. This reasoning, by the way, also applies to normalmap specular antialiasing...

The question now is: what do surfaces look like in practice? At what scales do they have detail? Are there scales that we don’t consider, that is, that we don't represent in geometry and normal maps, but that are not representable either with the simple BRDF models we do use?
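The three regimes can be seen in a toy experiment. The sketch below assumes a 1D sine-wave height field (the amplitude, wavelength and tap count are arbitrary choices for this example) and measures the mean and spread of its slope over an observation window of a given size.

```python
import math

def slope_stats(window, start, wavelength=1.0, amplitude=0.05, taps=2000):
    """Mean and standard deviation of a sine height field's slope over a window."""
    w = 2.0 * math.pi / wavelength
    slopes = [amplitude * w * math.cos(w * (start + window * (i + 0.5) / taps))
              for i in range(taps)]
    mean = sum(slopes) / taps
    var = sum((s - mean) ** 2 for s in slopes) / taps
    return mean, math.sqrt(var)

# Much smaller than the wavelength: almost no spread, one normal is enough.
close_mean, close_std = slope_stats(0.01, 0.1)

# Comparable to the wavelength: the result depends on where the window lands.
mid_mean_a, mid_std_a = slope_stats(0.5, 0.0)
mid_mean_b, mid_std_b = slope_stats(0.5, 0.25)

# Many wavelengths: the statistics are stable wherever we look.
far_mean_a, far_std_a = slope_stats(50.0, 0.1)
far_mean_b, far_std_b = slope_stats(50.0, 0.37)
```

In the close case the spread is tiny, in the far case it is the same regardless of `start`, and only the in-between windows give statistics that change with position, which is the hard case for a simple BRDF.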

- Surfaces sparkle!

In games nowadays, we easily look at pixel footprints of a square millimetre or so, for example that is roughly the footprint on a character’s face when seen in a foreground full body shot, in full HD resolution.
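As a back-of-the-envelope check of that figure, the sketch below computes the footprint for a hypothetical shot: a 1.8 m tall character exactly filling a 1080p frame, a 60 degree vertical field of view, and a surface facing the camera. All of these numbers are assumptions chosen for the example.

```python
import math

def pixel_footprint_mm(distance_m, vertical_fov_deg, vertical_resolution):
    """Side of one pixel's footprint, in mm, on a surface facing the camera."""
    frame_height_m = 2.0 * distance_m * math.tan(math.radians(vertical_fov_deg) / 2.0)
    return frame_height_m / vertical_resolution * 1000.0

fov_deg = 60.0
# Distance at which a 1.8 m tall subject spans the full frame height.
distance_m = 0.9 / math.tan(math.radians(fov_deg) / 2.0)
side_mm = pixel_footprint_mm(distance_m, fov_deg, 1080)   # about 1.67 mm
area_mm2 = side_mm * side_mm                              # about 2.8 mm^2
```

So the footprint is on the order of a couple of square millimetres, and it shrinks quickly for closer shots or higher resolutions.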

If you look around, many real world surfaces “sparkle” over an integration area of a square millimetre or so: they have either multiple BRDF lobes or very “noisy” BRDF lobes.

If you look closely at most surfaces, they won’t have smooth, clear specular highlights. Noise is everywhere, often "by design", e.g. with most formica countertops, and sometimes "naturally", even in plants, soft plastics, leather and notably skin.

Some surfaces have stronger spikes in certain directions and definitely “sparkle” visibly, even at scales we render today. Road asphalt is an easy and common example.

There are surfaces whose roughness is at a frequency high enough to always be represented by a simple BRDF: some hard plastics (if not textured), glass, glazed ceramic, paper, some smooth paints. But many are not.

Note: Eyes can be deceptive in this kind of investigation. For example, Apple’s aluminum on a MacBook or iPhone looks “sparkly” in real life, but its surface roughness is probably too small to matter at the scales we render.

This opens another can of worms that I won’t discuss: what to do with materials that people “know” should behave in a given way, but that don’t really at the resolutions we can currently render at...

|

| My Macbook Pro Retina |

- Surfaces are anisotropic!

Anisotropy is much more frequent than one might suspect, especially for metals. We tend to think that only “brushed” finishes are really anisotropic, but when you start looking around you notice that many metals show anisotropy, due to the methods used to shape them.

Similar artifacts are sometimes found also in plastics (especially soft or laminated) or paints.

|

| On the left: matte wall paint and a detail of its texture. On the right: the metal cylinder looks isotropic, but a close-up reveals some structure. |

- Surfaces aren’t uniform!

At a higher scale, if you had to bet on how any surface looks at the resolutions at which we author specular roughness maps, it will always be somewhat non-uniform pixel to pixel.

If it’s true that many surfaces have roughness at frequencies that would require complex, multi-lobe BRDFs to render at our current resolutions, you can imagine how it is even more likely that our BRDFs won’t ever have the exact same behaviour pixel to pixel.

Another way of thinking of the effects of using simpler BRDFs driven by uniform parameters is that we are losing resolution. We are taking a surface representation that is probably reasonable at some larger scale and using it per pixel, at a scale at which it doesn’t apply. So it’s somewhat similar to doing the right thing with wrong, “oversized” pixel footprints. In other words, over-blurring.

- Surfaces aren’t flat!

Flat surfaces aren’t really flat. Or at least it’s rare, if you observe a reflection over smooth surfaces as it “travels” along them, not to notice subtle distortions.

Have you ever noticed the way large glass windows for example reflect? Or ceramic tiles?

This effect is of course at a much lower frequency than the ones described above, but it’s still interesting. Certain things that have to be flat in practice are, to the degree we care about in rendering (mirrors for example), but most others are not.

- Conclusions

My current interest in observing surfaces has been sparked by the fact that I’m testing hardware solutions for BRDF scanning (something I have always been interested in), thus I needed a number of surface samples to play with, ideally uniform, isotropic, well-behaved. And I found it was really hard to find such materials!

How much does this matter, perceptually, and when? I’m not yet sure, as it's tricky to understand what needs more complex BRDFs and what can be reasonably modeled with rigorous authoring of materials.

|

| Textures from The Order: 1886. Specular highlights are always "broken" via gloss noise, even on fairly smooth surfaces. |

Some links:

- Also in the article:

- Survey of non-linear prefiltering

- Discrete stochastic microfacet models (check out the video as well)

- Debevec's facial microgeometry and Jorge's realtime approximation

- Rendering glints on high-resolution normal-mapped specular surfaces

- Further links:
