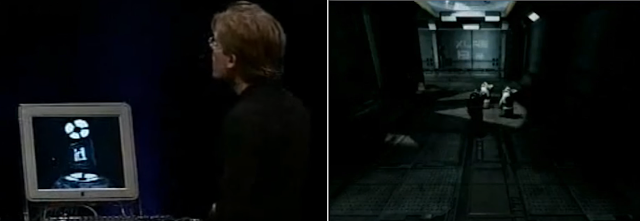

"The big trick we are getting now is the final unification of lighting and shadowing across all surfaces in a game - games had to do these hacks and tricks for years now where we do different things for characters and different things for environments and different things for lights that move versus static lights, and now we are able to do all of that the same way for everything..."

Who said this?

Jensen Huang, presenting NVidia's RTX?

Not quite... John Carmack. In 2001, at Tokyo's MacWorld, showing Doom 3 for the first time. It was running on NVidia hardware, though, just a bit less powerful than today's 20xx/30xx series: a GeForce 3.

You can watch the recording on YouTube for a bit of nostalgia.

And of course, the unifying technology at that time was stencil shadows - yes, we were at a time before shadowmaps were viable.

Now, I am not a fan of making long-term predictions. In fact, I believe there is a time horizon beyond which things are mostly dominated by chaos, and it's just silly to talk about what's going to happen then.

But if we wanted to make predictions, a good starting point is to look at history, as history tends to repeat itself. What happened the last few times we had significant innovation in rendering hardware?

Did compute shaders lead to simpler rendering engines, or more complex? What happened when we introduced programmable fragment shaders? Simpler, or more complex? What about hardware vertex shaders - a.k.a. hardware transform and lighting...

And so on and so forth. We can go all the way back to the first popular accelerated video card for the consumer market, the 3dfx Voodoo.

Memories... A 3dfx Voodoo. PCem has some emulation for these, if one wants to play...

Surely it must have made things simpler, not having to program software rasterizers specifically for each game, for each kind of object, for each CPU even! No more assembly. No more self-modifying code, s-buffers, software clipping, BSPs...

No more crazy tricks to get textures on screen, we suddenly got it all done for us, for free! Z-buffer, bilinear filtering, perspective correction... Crazy stuff we never could even dream of was now in hardware.

Imagine that - overnight you could have taken the bulk of your 3d engine and deleted it. Did it make engines simpler, or more complex?

Our shaders today, powered by incredible hardware, are much more code, and much more complexity, than the software rasterizers of decades ago!

Are there reasons to believe this time it will be any different?

Spoiler alert: no.

At least not in AAA real-time rendering. Complexity has nothing to do with technologies.

Technologies can enable new products, true, but even the existence of new products is always about people first and foremost.

The truth is that our real-time rendering engines could have been dirt-simple ten years ago. There's nothing inherently complex in what we have right now.

Getting from zero to a reasonable, real-time PBR renderer is not hard. The equations are there, just render one light at a time, brute force shadowmaps, loop over all objects and shadows and you can get there. Use MSAA for antialiasing...

Of course, you would need to trade off performance, and some quality, for such relatively "brute-force" approaches... But it's doable, and will look reasonably good.
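To make the "one light at a time" idea above concrete, here is a minimal sketch in Python rather than a real renderer. It shades a single surface point with a common Cook-Torrance formulation (GGX distribution, Smith-Schlick geometry term, Schlick Fresnel) and simply loops over every light. All names here (`brdf_times_cos`, `shade`, the `is_shadowed` callback, the light dictionaries) are hypothetical and chosen for illustration; `is_shadowed` stands in for a brute-force shadowmap lookup, which is not implemented. The sketch also assumes a single color channel, point lights, and a view direction above the surface.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)

def brdf_times_cos(n, v, l, albedo, roughness, metallic):
    """Cook-Torrance BRDF times n.l for one channel (illustrative only)."""
    n_l = max(dot(n, l), 0.0)
    if n_l == 0.0:
        return 0.0  # light is at or below the horizon
    roughness = max(roughness, 0.05)  # avoid a degenerate, infinitely sharp lobe
    h = normalize(tuple(a + b for a, b in zip(v, l)))  # half vector
    n_v = max(dot(n, v), 1e-4)
    n_h = max(dot(n, h), 0.0)
    v_h = max(dot(v, h), 0.0)
    a2 = roughness ** 4  # alpha = roughness^2, a2 = alpha^2
    d = a2 / (math.pi * (n_h * n_h * (a2 - 1.0) + 1.0) ** 2)  # GGX distribution
    k = (roughness + 1.0) ** 2 / 8.0
    g = (n_l / (n_l * (1.0 - k) + k)) * (n_v / (n_v * (1.0 - k) + k))  # Smith-Schlick
    f0 = 0.04 * (1.0 - metallic) + albedo * metallic
    f = f0 + (1.0 - f0) * (1.0 - v_h) ** 5  # Schlick Fresnel
    specular = d * g * f / (4.0 * n_l * n_v)
    diffuse = (1.0 - f) * (1.0 - metallic) * albedo / math.pi
    return (diffuse + specular) * n_l

def shade(point, n, v, material, lights, is_shadowed):
    """Brute force: visit every light, no culling, clustering or caching."""
    radiance = 0.0
    for light in lights:
        if is_shadowed(point, light):  # stand-in for a shadowmap test
            continue
        to_light = tuple(lp - p for lp, p in zip(light["position"], point))
        dist2 = dot(to_light, to_light)
        l = normalize(to_light)
        # Inverse-square falloff for a point light, then accumulate.
        radiance += light["intensity"] / dist2 * brdf_times_cos(n, v, l, **material)
    return radiance

if __name__ == "__main__":
    material = {"albedo": 0.8, "roughness": 0.5, "metallic": 0.0}
    lights = [{"position": (0.0, 0.0, 2.0), "intensity": 10.0}]
    view = normalize((0.0, 1.0, 1.0))
    print(shade((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), view, material, lights,
                lambda p, light: False))
```

The cost of this structure grows linearly with the number of lights for every shaded point, which is exactly the performance being traded away; most of the complexity in production engines goes into avoiding that loop.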

Even better? Just download Unreal, and hire -zero- rendering engineers. Would you not be able to ship any game your mind can imagine?

The only reason we do not... is in people and products. It's organizational, structural, not technical.

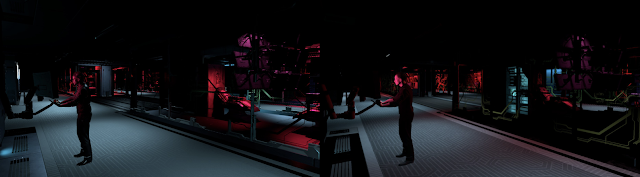

We like our graphics to be cutting edge, as graphics and performance still sell games, sell consoles, and are talked about.

And it's relatively inexpensive, in the grand scheme of things - rendering engineers are a small fraction of the engineering effort which in turn is not the most expensive part of making AAA games...


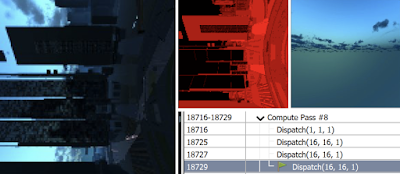

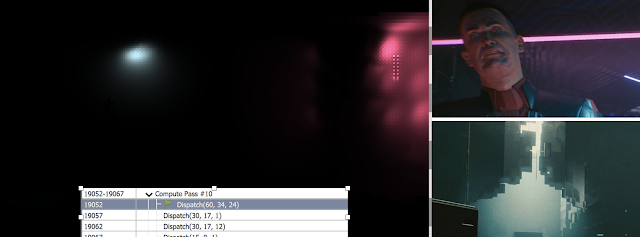

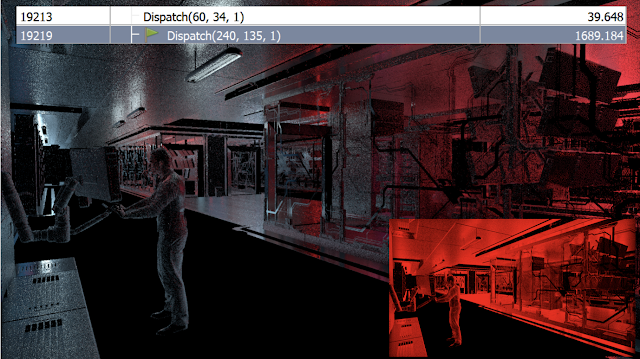

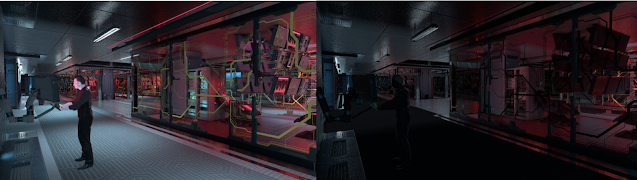

So pretty... Look at that sky. Worth its complexity, right?

In AAA it is perfectly OK to have someone work for, say, a month, producing new, complicated code paths to save, say, one millisecond in our frame time. Often it's perfectly OK to spend a month to save a tenth of a millisecond!

As long as this equation holds, we will always sacrifice engineering, and thus accept bigger and bigger engines and more complex rendering techniques, in order to have larger, more beautiful worlds, rendered faster!

It has nothing to do with hardware, nor does it have anything to do with the inherent complexity of photorealistic graphics.

We write code because we're not in the business of making disruptive new games. AAA is not where risks are taken, it's where blockbuster productions are made.

It's the nature of what we do, we don't run scrappy experimental teams, but machines with dozens of engineers and hundreds of artists. We're not trying to make the next Fortnite - that would require entirely different attitudes and methodologies.

And so, engineers gonna engineer. If you have a dozen rendering people on a game, its rendering will never be trivial - and once that's a thing that people do in the industry, it's hard not to do it. You have to keep competing on every dimension if you want to be at the top of the game.

The cyclic nature of innovation.

Another point of view, useful for making predictions, comes from the classic works of Clayton Christensen on innovation. These are also mandatory reads if you want to understand the natural flow of innovation, from disruptive inventions to established markets.

One of the phenomena that Christensen observes is that technologies evolve in cycles of commoditization, bringing costs down and scaling, and de-commoditization, leveraging integrated, proprietary stacks to deliver innovation.

In AAA games, rendering has not been commoditized, and the trend does not seem to be heading towards commoditization yet.

Innovation is still the driving force behind real-time graphics, not scale of production. Even if we have been saying for years, perhaps decades, that we were at the tipping point, in practice we never seemed to reach it.

We are not even close, at least in the big titles, to the point where production efficiency for artists and assets is really the focus.

It's crazy to say, but still today our rendering teams typically dwarf the efforts put into tooling and asset production efficiency.

We live in a world where it's imperative for most AAA titles to produce content at a steady pace. Yet, we don't see this percolating into the technology stack. Look at the actual engines (if you have experience with them), look at the talks and presentations at conferences. We are still focusing on features, quality and performance more than anything else.

We do not like to accept tradeoffs on our stacks, we run on tightly integrated technologies because we like the idea of customizing them to the game specifics - i.e. we have not embraced open standards that would allow for components in our production stacks to be shared and exchanged.

Alita - rendered with Weta's proprietary (and RenderMan-compatible) Manuka

I do not think this trend will change, at the top end, for the next decade or so at least, which is the only time horizon over which I would even care to make predictions.

I think we will see a focus on efficiency of the artist tooling, this shift in attention is already underway - but engines themselves will only keep growing in complexity - same for rendering overall.

We have seen just recently, in the movie industry (which is another decent way of "predicting" the future of real-time), that production pipelines are becoming somewhat standardized around common interchange formats.

For the top studios, rendering itself is not standardized, with most big ones running on their own proprietary path-tracing solutions...

So, is it all pain? And will it always be?

No, not at all!

We live in a fantastic world full of opportunities for everyone. There is definitely a lot of real-time rendering that has been completely commoditized and abstracted.

People can create incredible graphics without knowing anything at all of how things work underneath, and this is definitely something incredibly new and exciting.

Once upon a time, you had to be John friggin' Carmack (and we went full circle...) to make a 3d engine, create Doom, and be legendary because of it. Your hardcore ability to push pixels enabled entire game genres that were impossible to create without the very best of technical skills.

Today? I believe an FPS template ships for free with Unity, you can download Unreal with its source code for free, you have Godot... All products that invest in art efficiency and ease of use first and foremost.

Everyone can create any game genre with little complexity, without caring about technology - the complicated stuff is only there for cutting-edge "blockbuster" titles where bespoke engines matter, and only to deliver somewhat better features (e.g. fidelity, performance, etc.), not to fundamentally enable the game to exist...

And that's already professional stuff - we can do much better!

Three.js is the most popular 3d engine on GitHub - you don't need to know anything about 3d graphics to start creating. We have Roblox, Dreams, Minecraft and Fortnite Creative. We have Notch, for real-time motion graphics...

Computer graphics has never been simpler, and at the same time, at the top end, never been more complex.

Roblox creations are completely tech-agnostic.

Conclusions

AAA will stay AAA - and for the foreseeable future it will keep being wonderfully complicated.

Slowly we will invest more in productivity for artists and asset production - as it really matters for games - but it's not a fast process.

It's probably easier for AAA to become relatively irrelevant (compared to the overall market, which expands faster in other directions than in the established AAA one) than for it to radically embrace change.

Other products and other markets are where real-time rendering is commoditized and radically different. It -is- already: all these products already exist, and we already have huge market segments that do not need to bother at all with technical details. And the quality and scope of these games grow year after year.

This market was facilitated by the fact that we have 3d hardware acceleration pretty much in any device now - but at the same time new hardware is not going to change any of that.

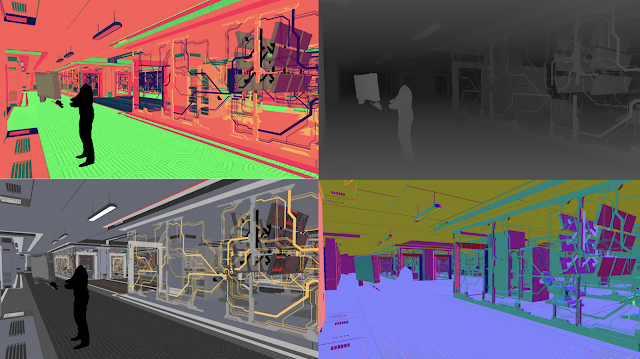

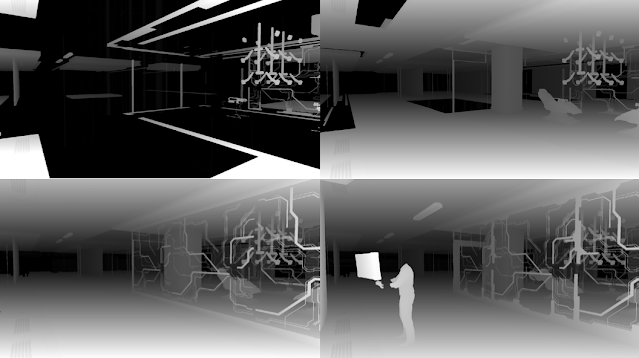

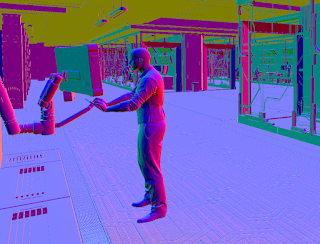

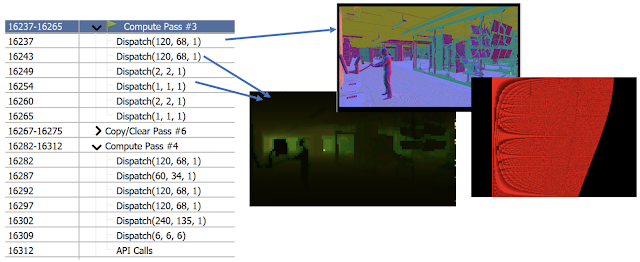

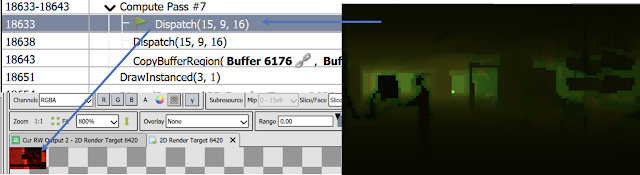

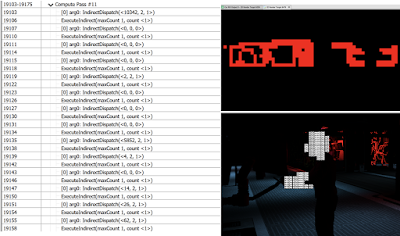

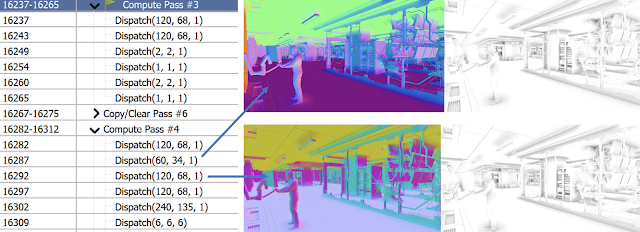

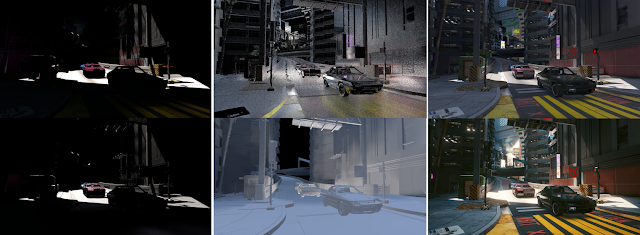

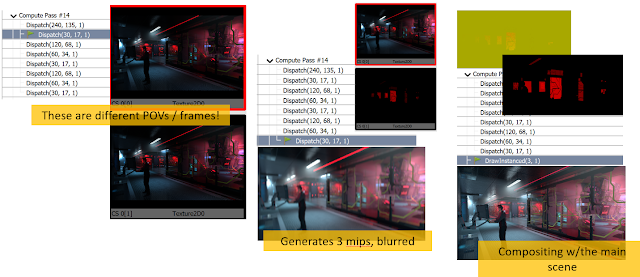

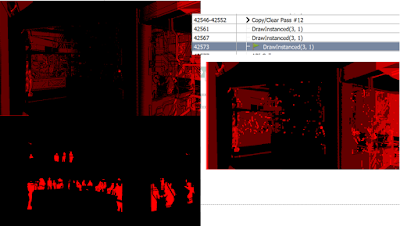

Raytracing will only -add- complexity at the top end. It might make certain problems simpler, perhaps (note - right now people seem to underestimate how hard it is to make good RT-shadows or, even worse, RT-reflections, which are truly hard...), but it will also make the overall effort to produce a AAA frame bigger, not smaller - like all technologies before it.

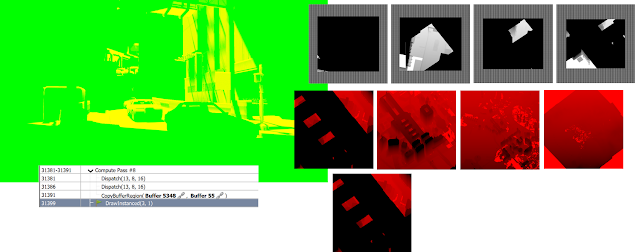

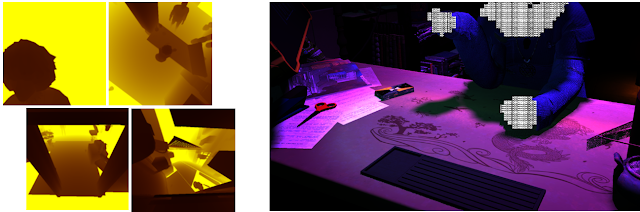

We'll see incredible hybrid techniques, and if today we have dozens of ways of doing shadows and combining signals to solve the rendering equation in real-time, these will only grow more complex - and more wonderful - in the future.

Raytracing will eventually percolate to the non-AAA too, as all technologies do.

But that won't change complexity or open new products there either, because people who are making real-time graphics with higher-level tools already don't have to care about the technology that drives them - technology there will always evolve under the hood, never to be seen by the users...